Integration | Agentic Workflows in Modern Software

How Generative AI Continues to Reshape the Software Landscape

Software engineering has traditionally focused on turning a set of inputs into a predictable set of outputs. When users encountered bugs, logs and reports would make these issues reproducible, and a patch would then restore expected behavior. Today, generative systems such as agentic frameworks, diffusion models, and foundation models are reshaping software. New application boundaries are emerging that allow deterministic application flows to integrate generative capabilities seamlessly.

Once cutting-edge, generative AI is now widely expected by users and routinely deployed to production. From chatbots that handle customer queries, to code copilots that assist developers, to agentic orchestration frameworks that coordinate complex tasks, these capabilities are increasingly integrated into mature products that are agent-aware and capable of interfacing with modern AI tools.

Why does this shift demand our attention? In short, there is significant competitive advantage for products that seamlessly integrate AI solutions, as well as numerous potential pitfalls. Reliability can no longer be defined solely by “it compiles and passes our tests.” Testing now requires new approaches, user trust depends on correct handling of generative logic, and failures can no longer be easily traced.

Product solutions increasingly manage probabilistic, generative, and context-dependent logic. This calls for rethinking system design, updating metrics, refining error-handling strategies with robust fallbacks, and creating methods for aligning varied user experiences with product improvements. Let’s look at how this shift shapes engineering practices at a deeper level.

Embracing the Paradigm Shift

Historically, software systems were largely deterministic, with predictable outputs, explainable processes, and failures traceable to flawed assumptions. Logic was structured around binary states: a test either passed or failed, a server was either up or down, and a boolean flag often steered control flow. In that world, correctness was crisp and readily provable.

Modern applications, however, increasingly include components whose outputs reflect likelihoods rather than fixed states. How we reason about and compose software is evolving. Instead of thinking only in pass/fail terms, we might now consider how “correct” an output is or assess whether it meets a certain semantic threshold.

Engineers are adopting new methods such as:

- Probabilistic Testing: Sampling multiple potential outputs to assess variability in responses.

- Fuzzy Validation: Accepting results within a defined range of correctness rather than a single binary benchmark.

- Semantic Metrics: Gauging alignment with user intent, sometimes via natural language evaluation or embedding-based similarity checks.

- Trust Calibration: Determining when a model’s output can be safely deployed without human review, often informed by confidence scores or user feedback loops.
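
The first two methods can be combined in a small test harness. The sketch below is illustrative only: `fake_model` is a hypothetical stand-in for a real model call, and `difflib.SequenceMatcher` is a crude lexical proxy for the embedding-based similarity a production system would more likely use. The threshold and sample count are assumptions, not recommendations.

```python
import random
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Crude lexical similarity in [0, 1]; a stand-in for an embedding-based score."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def fake_model(prompt: str, rng: random.Random) -> str:
    """Hypothetical non-deterministic generator; replace with a real model call."""
    return rng.choice([
        "Your order ships in 3 days.",
        "The order will ship in 3 days.",
        "Shipping takes 3 days.",
        "I cannot help with that.",
    ])


def pass_rate(prompt: str, reference: str, samples: int = 50,
              threshold: float = 0.5, seed: int = 0) -> float:
    """Sample the generator repeatedly (probabilistic testing) and return the
    share of outputs whose similarity to the reference meets the threshold
    (fuzzy validation)."""
    rng = random.Random(seed)
    hits = sum(
        similarity(fake_model(prompt, rng), reference) >= threshold
        for _ in range(samples)
    )
    return hits / samples


rate = pass_rate("When does my order ship?", "Your order ships in 3 days.")
print(f"pass rate: {rate:.2f}")
```

Instead of asserting on a single output, a test suite would assert that the pass rate stays above an agreed floor, which turns "how correct is this?" into a number the team can track across model versions.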

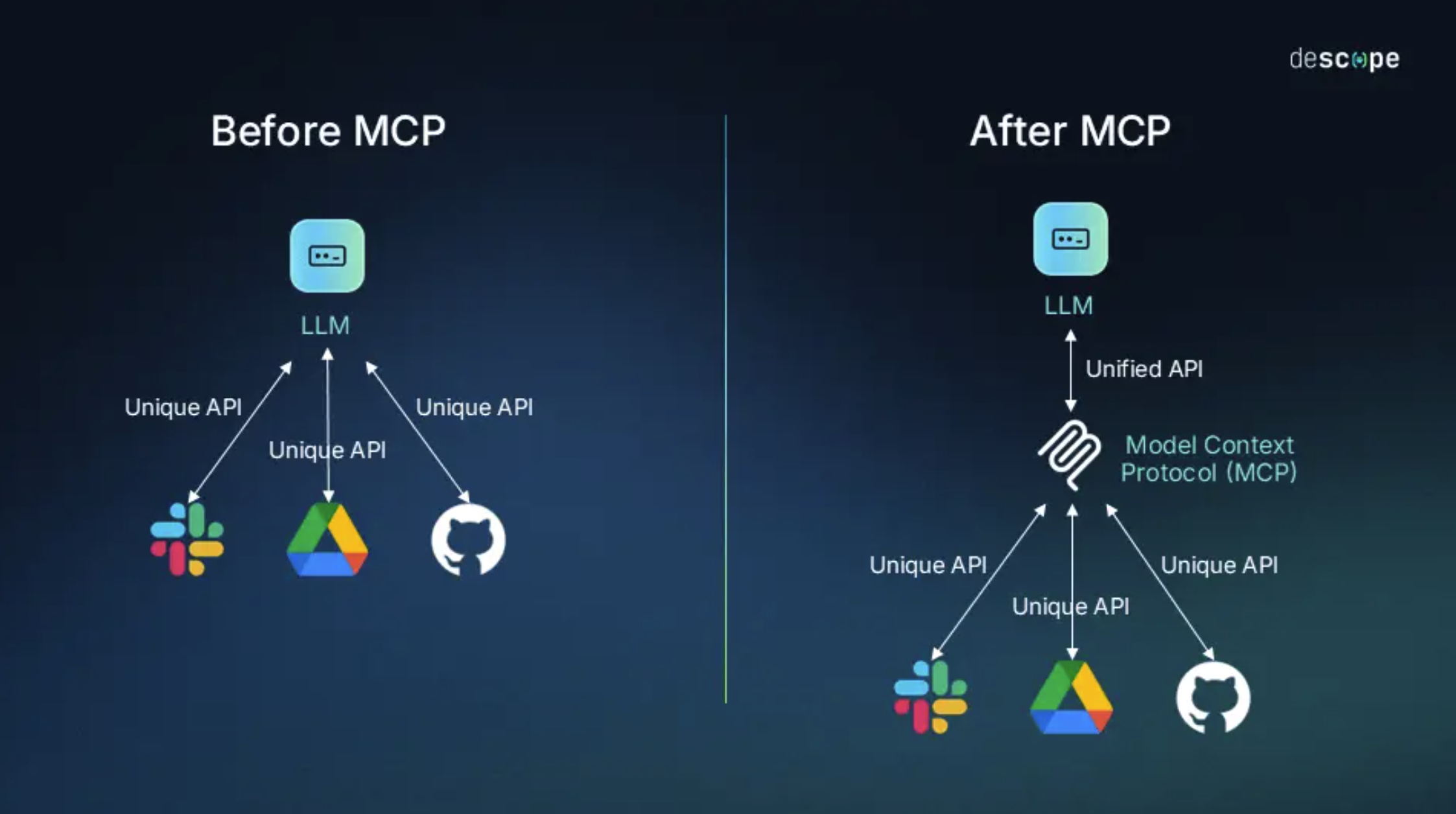

Model Context Protocol — Abstraction Layer/Gateway for Connector Sources.

Ref: Descope

Emerging standards like the Model Context Protocol (MCP) aim to standardize how AI applications and agents connect to external tools and data sources and exchange context with them. By defining a common language for inputs, outputs, and metadata, they allow diverse agents and services to collaborate more seamlessly, reducing the need for custom integrations. This encourages a modular design, where specialized AI modules tackle planning, execution, or evaluation tasks with consistent handoffs. However, adopting these standards may introduce security considerations and other overheads, especially in large-scale deployments. Over time, protocols like MCP promise to shape an interoperable AI ecosystem that lets agentic frameworks orchestrate services across application boundaries and external APIs with transparency and control.
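
To make the "common language" idea concrete, here is a simplified sketch of a request envelope. MCP messages are built on JSON-RPC 2.0, and the shape below follows the protocol's `tools/call` method; the tool name `create_event` and its arguments are hypothetical, and the real specification defines additional fields and lifecycle steps that are omitted here.

```python
import json
from itertools import count

_ids = count(1)  # simple request-id generator for this sketch


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request shaped like an MCP `tools/call` message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def parse_response(raw: str) -> dict:
    """Return the result payload, or raise if the server reported an error."""
    message = json.loads(raw)
    if "error" in message:
        raise RuntimeError(message["error"].get("message", "unknown error"))
    return message["result"]


# Hypothetical tool name and arguments, for illustration only.
print(tool_call_request("create_event", {"title": "Sync", "duration_min": 30}))
```

Because every connector receives the same envelope, an orchestrator can swap one tool server for another without changing how requests are built or how errors are surfaced.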

Beyond these techniques, a deeper cultural change is underway. Teams now collaborate more closely with data scientists and domain experts to define “acceptable drift” or “confidence thresholds.” QA and DevOps processes are evolving to handle model versioning and real-time monitoring of AI-generated outputs. Successful engineering teams will treat probabilistic output as a first-class design constraint, drawing clear lines between where generative components belong and where deterministic safeguards must remain.

As AI solutions become more layered and complex, embracing this paradigm shift is essential for engineering reliable, trustworthy, and innovative software. Let’s consider how these methodologies translate into effectively integrating generative and agentic capabilities.

Integrating Generative and Agentic Systems

Modern software increasingly operates across two distinct layers. The first is the familiar realm of deterministic logic, crisp conditionals, and predictable data pipelines, a foundation that has long underpinned the discipline. The second, newer layer is fuzzier and probabilistic, driven by generative models, agentic orchestrators, and foundation model APIs that infer, suggest, or reason rather than merely execute, compute, or render. This bifurcation is becoming ever more relevant for modern system design.

For teams embedding AI without full agentic workflows, focusing on a specific application surface, such as a plugin or extension, can yield a clearer mental model and better reasoning heuristics. These components can be “boxed in” conceptually, with well-defined interfaces that help the broader application reason about inputs and outputs. Such an approach has spurred new design layers at the boundary between application inference and application control. A fallback or hybrid approach is one core example: if a model’s confidence score is low, the request reverts to a deterministic workflow for guaranteed results. While the fallback approach itself is not new, correctly evaluating the AI output in real time is nuanced.
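
A minimal sketch of the fallback pattern follows. Everything here is an assumption for illustration: the `Suggestion` type, the `answer` router, and the 0.8 threshold are hypothetical, and how a confidence score is actually obtained depends on the model and is the hard part the paragraph above alludes to.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Suggestion:
    text: str
    confidence: float  # assumed to lie in [0, 1]; its source is model-specific


def answer(query: str,
           generate: Callable[[str], Suggestion],
           deterministic: Callable[[str], str],
           min_confidence: float = 0.8) -> tuple[str, str]:
    """Return (answer, source), using the generative path only when it is
    confident enough and reverting to the deterministic workflow otherwise."""
    try:
        suggestion = generate(query)
    except Exception:
        # A model failure is treated the same way as low confidence.
        return deterministic(query), "deterministic"
    if suggestion.confidence >= min_confidence:
        return suggestion.text, "generative"
    return deterministic(query), "deterministic"


# Stubbed usage: a low-confidence suggestion falls back to the rule-based path.
unsure = lambda q: Suggestion("Maybe try restarting?", 0.4)
rules = lambda q: "See the troubleshooting guide, section 2."
print(answer("App will not start", unsure, rules))
```

Returning the source alongside the answer lets the calling application log which path served each request, which is useful when tuning the threshold later.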

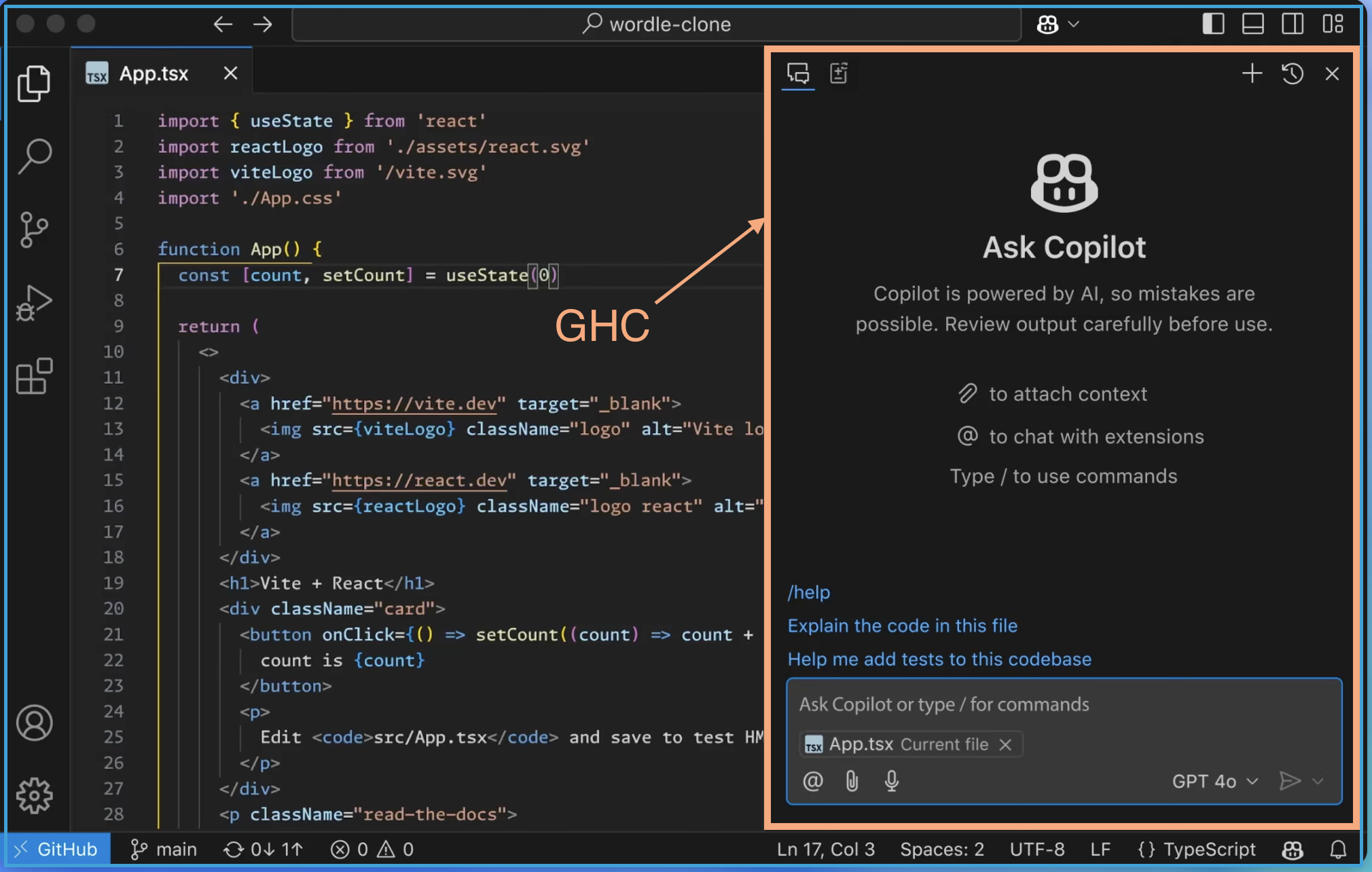

GitHub Copilot for Visual Studio Code, Extension Product Integration.

Ref: GitHub

GitHub Copilot (GHC) for Visual Studio Code illustrates extension-based integration. The editor itself remains deterministic with the extension installed: Visual Studio Code still saves files reliably, loads extensions predictably, and maintains a stable UI, while Copilot sits in an encapsulated generative module that reads the active editing context and proposes completions. Its access is limited to suggestion surfaces, preserving the editor’s expectations for how the program’s flow should operate.

However, as AI capabilities evolve, many teams are moving beyond surface integrations toward fully agentic workflows. In these setups, autonomous or semi-autonomous modules coordinate to accomplish higher-level goals. Rather than simply assisting within a fixed interface, they dynamically decide which tools to invoke, in what sequence, and how to adapt based on context. They often rely on “connectors” to external services, such as Microsoft Graph, Google Calendar, or Salesforce APIs, that act as action surfaces for tasks such as scheduling meetings, updating CRM records, or executing multi-step business workflows. Microsoft’s agentic frameworks include AutoGen, Semantic Kernel, and Azure AI Agent Service.
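
The control loop behind such a workflow can be sketched in a few lines. This is not how AutoGen, Semantic Kernel, or any specific framework is implemented: the connectors are hypothetical local functions, and `choose_tool` is a hard-coded stub standing in for the model's decision about which tool to invoke next.

```python
from typing import Callable, Optional


# Hypothetical connectors; real ones would call external service APIs.
def schedule_meeting(state: dict) -> dict:
    return {**state, "meeting": "scheduled"}


def update_crm(state: dict) -> dict:
    return {**state, "crm": "updated"}


TOOLS: dict[str, Callable[[dict], dict]] = {
    "schedule_meeting": schedule_meeting,
    "update_crm": update_crm,
}


def choose_tool(state: dict) -> Optional[str]:
    """Stub for the model's decision: pick the next tool from the current
    state, or return None when the goal is considered complete."""
    if "meeting" not in state:
        return "schedule_meeting"
    if "crm" not in state:
        return "update_crm"
    return None


def run_agent(state: dict, max_steps: int = 5) -> dict:
    """Repeatedly ask for the next tool and apply it, with a hard step limit."""
    for _ in range(max_steps):
        name = choose_tool(state)
        if name is None:
            break
        if name not in TOOLS:
            # Deterministic safeguard: only registered tools may run.
            raise ValueError(f"unknown tool: {name}")
        state = TOOLS[name](state)
    return state


print(run_agent({"goal": "follow up with customer"}))
```

The registry lookup and the step limit are the deterministic guardrails around the probabilistic decision: the agent chooses the sequence, but it can only choose from registered tools and cannot loop indefinitely.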

Supporting these agentic workflows adds a new architectural layer that sits outside of traditional control flow, enabling adaptive orchestration across components. As a result, existing products may need to be refactored. Modules that once passed data linearly through a pipeline might now be tasked with sharing snapshots of internal state or supporting idempotent behaviors, allowing agentic systems to query, update, or coordinate them more intelligently. Meanwhile, new applications can be designed around this orchestration layer from the start, structuring core components to assume a goal-driven agent will coordinate services in real time.
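
As one illustration of what such a refactor might look like, the sketch below gives a module a read-only snapshot and an idempotent write. The `InventoryService` class and its idempotency-key scheme are hypothetical; the point is only that an agent which retries a call after a timeout should not apply the same change twice.

```python
class InventoryService:
    """Hypothetical module refactored so an agent can query and retry safely."""

    def __init__(self, stock: dict[str, int]):
        self._stock = dict(stock)
        # Maps each idempotency key to the result of its first application.
        self._applied: dict[str, int] = {}

    def snapshot(self) -> dict[str, int]:
        """Return a copy of internal state so callers cannot mutate it."""
        return dict(self._stock)

    def reserve(self, key: str, item: str, qty: int) -> int:
        """Reserve stock and return the remaining quantity. Repeating a call
        with the same key returns the original result without reserving again."""
        if key in self._applied:
            return self._applied[key]
        if self._stock.get(item, 0) < qty:
            raise ValueError(f"insufficient stock for {item}")
        self._stock[item] -= qty
        self._applied[key] = self._stock[item]
        return self._stock[item]


service = InventoryService({"widget": 5})
service.reserve("req-1", "widget", 2)
service.reserve("req-1", "widget", 2)  # retried call, no second deduction
print(service.snapshot())
```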

Architectural patterns are already emerging to support this shift: tool-usage frameworks that separate planning from execution, protocols like MCP mentioned earlier, and sidecar agents that monitor and act alongside existing services. As the user experience shifts toward increasingly generative workflows, we’re blending static control flow with probabilistic inference layers, embedding reasoning surfaces into production, and managing trust and ambiguity at runtime. While these complexities introduce new challenges, they also raise the ceiling on the kinds of user experiences and intelligent capabilities we can design into software.